FACTS ON 4 COMMON BIASES ABOUT FACE RECOGNITION

Is there a way to make sure that workers actually wear and use personal protective equipment (PPE) at hazardous production facilities? No big deal. Just train a neural network to recognize whether workers all over the facility are wearing PPE, and use it to prevent accidents. How do you do that? That’s what today’s post is about.

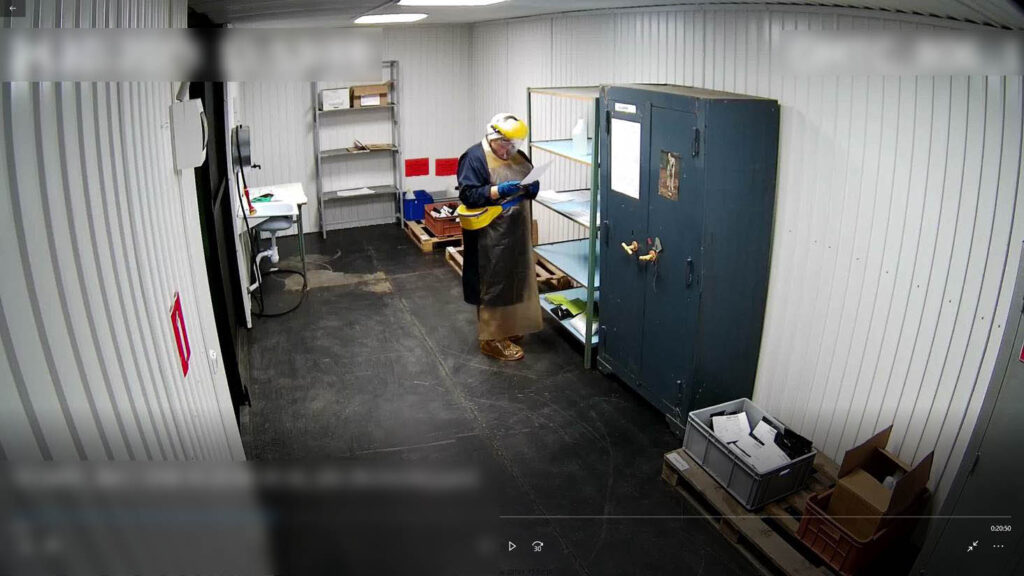

Is a standard CCTV system effective? There are ordinary people behind the cameras who get tired and distracted and have momentary lapses in concentration that could prove fatal for workshop personnel. The good news is that progress comes to save the day. A properly trained artificial intelligence detects safety violations immediately and alerts the worker one way or another, e.g. with a sharp sound, vibration, or SMS.

We trained AI-based video analytics to monitor safety compliance in the workplace in multiple ways:

We called this platform for workplace accident prevention “Digital Worker”, and its video analytics tool can detect:

How does the Digital Worker IoT platform count people and know a worker’s job? It does so by means of digital differentiation; one of the ways is seeing the color of the safety wear or hard hat on a worker. Take a drilling site, for example, where each hard hat color depends on the job. Blue hard hats are worn by drilling engineers, green ones by drilling supervisors, and so on. The site can be equipped with color video cameras, each covering a specific area of the site. The number of hard hats counted in real time equals the number of workers. At the end of the day, all this data is consolidated into a schedule for every area, showing which workers did the job, how many of them, and in what area.
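The counting and end-of-day consolidation described above can be sketched in a few lines. This is only an illustration, not the Digital Worker implementation: it assumes an upstream detector already returns the list of hard hat colors seen in each frame, and the names `ROLE_BY_COLOR`, `count_roles`, and `consolidate` are hypothetical. Only the blue and green roles come from the example above.

```python
from collections import Counter, defaultdict

# Color-to-role mapping. Blue and green come from the drilling site example;
# any other color is site-specific and reported as "unknown" in this sketch.
ROLE_BY_COLOR = {"blue": "drilling engineer", "green": "drilling supervisor"}


def count_roles(hat_colors):
    """Count workers per role in one frame from the detected hard hat colors."""
    return Counter(ROLE_BY_COLOR.get(color, "unknown") for color in hat_colors)


def consolidate(frames):
    """Summarize a day: peak headcount per role for every camera area.

    `frames` is an iterable of (area, hat_colors) pairs, one per analyzed
    frame (an assumed input format, not the platform's actual data model).
    """
    summary = defaultdict(Counter)
    for area, hat_colors in frames:
        for role, n in count_roles(hat_colors).items():
            summary[area][role] = max(summary[area][role], n)
    return {area: dict(roles) for area, roles in summary.items()}
```

Taking the peak count per role is one possible design choice; a real schedule would more likely keep timestamps so that it can show when each worker was in each area.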

The system recognizes dangerous situations and notifies the operator about them. For example, it is forbidden to be near the drilling rig while it is operating. If a worker approaches the forbidden area, the camera notices it and sends an alert.
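A forbidden-area check of this kind can be sketched as a point-in-polygon test. The sketch below makes several assumptions that are not from the original post: the zone is drawn as a polygon in image coordinates, each detected worker is given as a bounding box `(left, top, right, bottom)`, and the rig state arrives as a boolean flag. The function names are hypothetical.

```python
def point_in_polygon(point, polygon):
    """Ray-casting test: True if point (x, y) lies inside the polygon."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does this edge cross the horizontal ray going right from the point?
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def zone_alerts(worker_boxes, zone, rig_running):
    """Return the worker boxes standing inside the zone while the rig runs."""
    if not rig_running:
        return []
    alerts = []
    for left, top, right, bottom in worker_boxes:
        foot = ((left + right) / 2, bottom)  # bottom-center of the box
        if point_in_polygon(foot, zone):
            alerts.append((left, top, right, bottom))
    return alerts
```

The bottom-center of the box is used as an approximation of where the worker stands on the ground, so a worker whose head merely overlaps the zone in the image does not trigger an alert.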

The system also reacts when foreign objects interfere with machinery operation. This picture shows how an animal is detected on the production line:

Can you trick a neural network? Let’s say a worker fastens a hard hat to a belt. What is going to happen? In a case like this, two detectors are involved: they find the person’s skeleton, check whether a color spot is on top of it, and look for objects that move in sync. A hard hat on the belt will hardly “look around” with the person, because to do so it would have to be on the head, which moves in a certain way. Such a trick is easy to detect, so tricksters are caught as they try to break the rules.
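The idea of checking that the hat sits on top of the skeleton and moves together with the head can be illustrated with a deliberately simplified sketch. It assumes that a pose detector supplies the head keypoint and an object detector supplies the hat center for every frame; `hat_is_worn`, `head_radius`, and `min_ratio` are hypothetical names and thresholds, not part of the platform described here.

```python
import math


def hat_is_worn(head_track, hat_track, head_radius, min_ratio=0.8):
    """Decide whether a hard hat follows the head across frames.

    head_track, hat_track: lists of (x, y) positions, one per frame, for the
    head keypoint and the hat center. head_radius uses the same pixel units.
    The hat counts as worn if it stays within head_radius of the head in at
    least min_ratio of the frames.
    """
    if not head_track or len(head_track) != len(hat_track):
        raise ValueError("tracks must be non-empty and of equal length")
    close = sum(
        1
        for head, hat in zip(head_track, hat_track)
        if math.dist(head, hat) <= head_radius
    )
    return close / len(head_track) >= min_ratio
```

A hat fastened to a belt stays near the hips in every frame, so it fails the distance check however the worker moves.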

What else can a neural network see?

The Digital Worker platform is a mix of IoT and AI technologies and tools for industrial enterprises and construction companies. It is designed to ensure employee safety and improve performance, with a focus on minimizing the number of accidents and preventing fraud and safety violations.

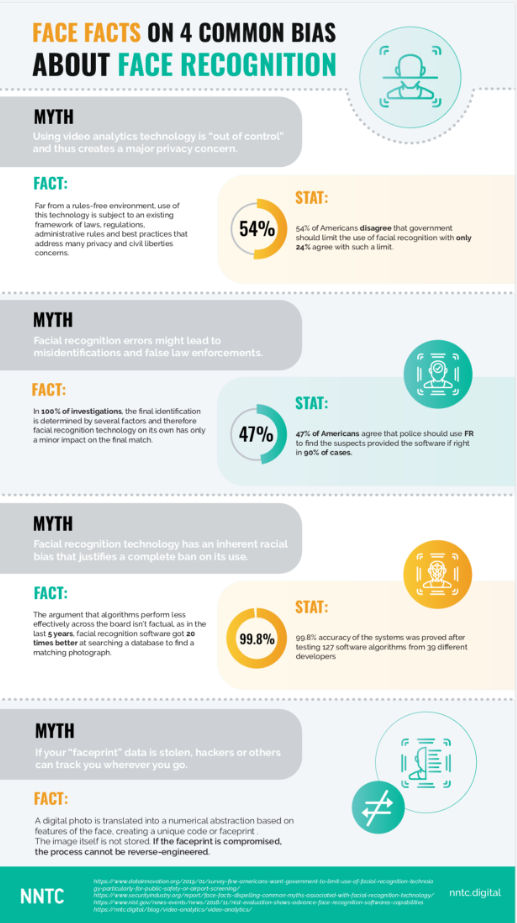

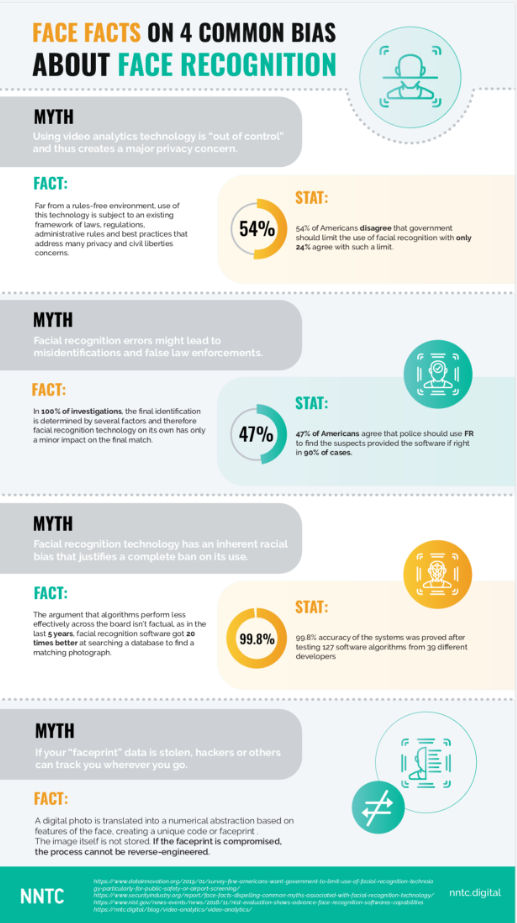

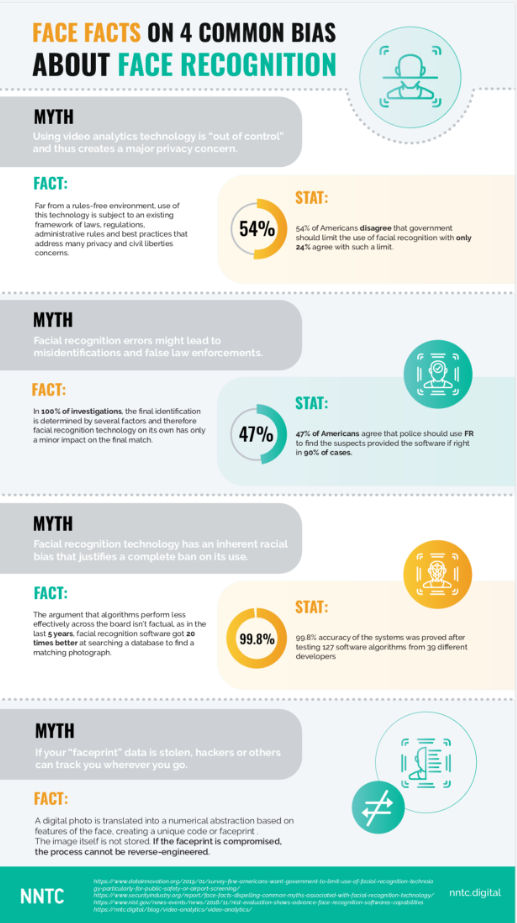

Video Analytics Myths, or Big Brother Is Watching You

Face recognition, one of the most in-demand and flexible technologies, is gradually conquering more and more industries and entering your life, even if you don’t see it. As video analytics gains momentum, a real outbreak of superstitions, doubts, and myths makes the technology look rather controversial to laypersons.

Many of our customers worry that face recognition technology can be biased, prone to critical errors, or able to collect personal information. To back up their concerns, they cite examples of misuse of the technology or software bugs. In this post, we’ll try to dispel the most persistent myths, answer some pertinent questions, and evaluate the actual risk.

Myth 1. Video analytics cannot be effective in a multinational country

What they say: The face recognition system cannot perform with the same efficiency when capturing people of different ethnic groups, which automatically renders it useless in multinational countries.

What the facts say: The face recognition system, like any other artificial intelligence, needs training. The more people pass through the system, the better it identifies key parameters. Training it properly is the responsibility of software developers, whose carelessness, aggravated by undue rush, may lead to conflicts that damage both the company’s reputation and the technology’s image.

Myth 2. Video analytics may discredit an honest person

What they say: The face recognition system may mistake an ordinary shop visitor for a criminal and make him/her suffer the consequences. That is why we cannot rely on the technology in such critical matters!

What the facts say: Too often, these incidents are caused by misuse of the technology by the people who install and configure video surveillance systems. Here are key contributors to correct video analytics:

Myth 3. Cheating video analytics is easy

What they say: A violator can “deceive” a security system by putting on glasses, growing or shaving a beard, or hiding his/her face under a headband or hood. In real life, the system will fail to respond in time and recognize the violator.

What the facts say: Face recognition uses neural networks that analyze 54 points on a human face. The system will recognize a violator with up to 75% of his/her face covered, so the presence or absence of a beard or dark glasses will not deceive it. Moreover, once the suspect is recognized, the system alerts security officers automatically, outperforming ordinary surveillance systems in both speed and reliability.

Myth 4. Using video analytics technology is something illegal

What they say: I am afraid that my employees or visitors will accuse me of invading their privacy or violating their rights. I don’t want them to think of me as Big Brother controlling all aspects of their lives.

What the facts say: Face recognition technology does not invade people’s privacy. Using video analytics to ensure public safety is permitted by law, so it does not violate but protects the rights of law-abiding people. When you integrate video analytics with customer service, you improve your service quality and convey a personal message to every customer, just like targeted ads on the Internet, but right here and now.

Video analytics technology that employs AI, neural networks, and software code is nothing more than a foundation that can be tailored to the specifics and needs of any business. Correct and ethical use of this technology is the responsibility of the companies that implement it, the developers, and those who train the AI. Face recognition is neither a biased nor an unfair technology in itself, and incidents are often due to improper implementation or misconfiguration.