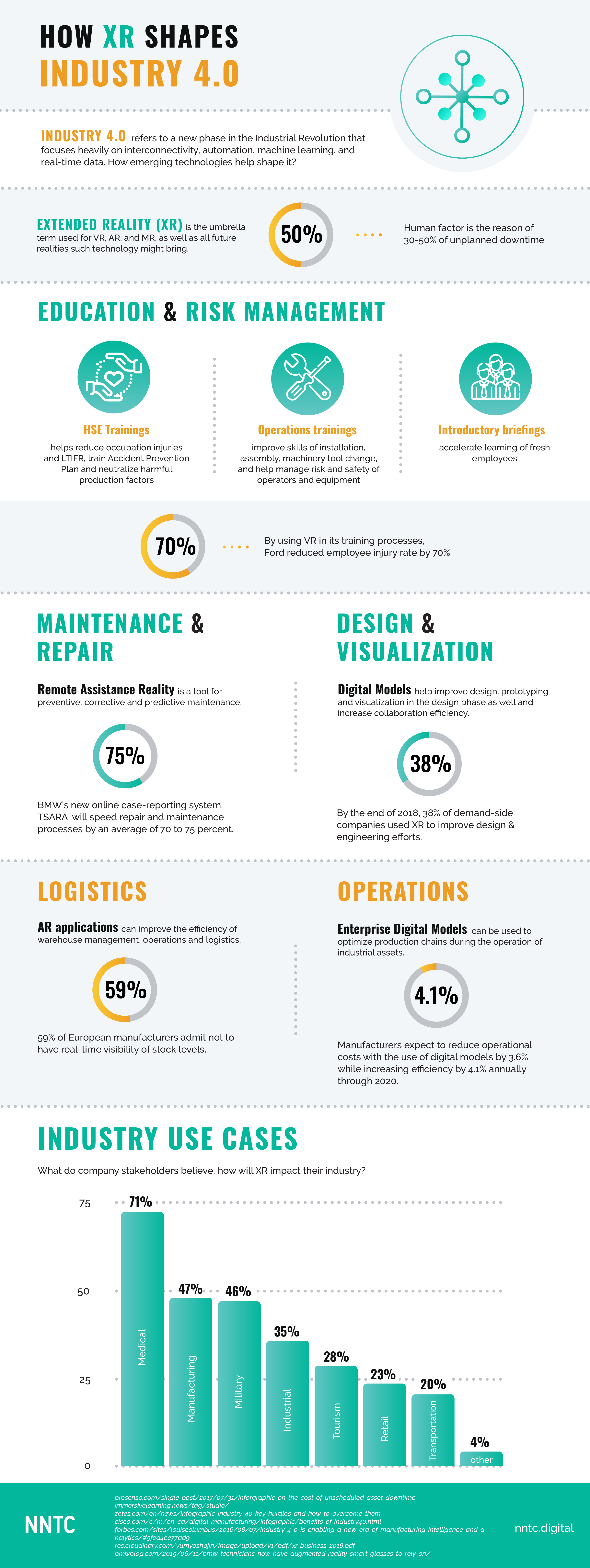

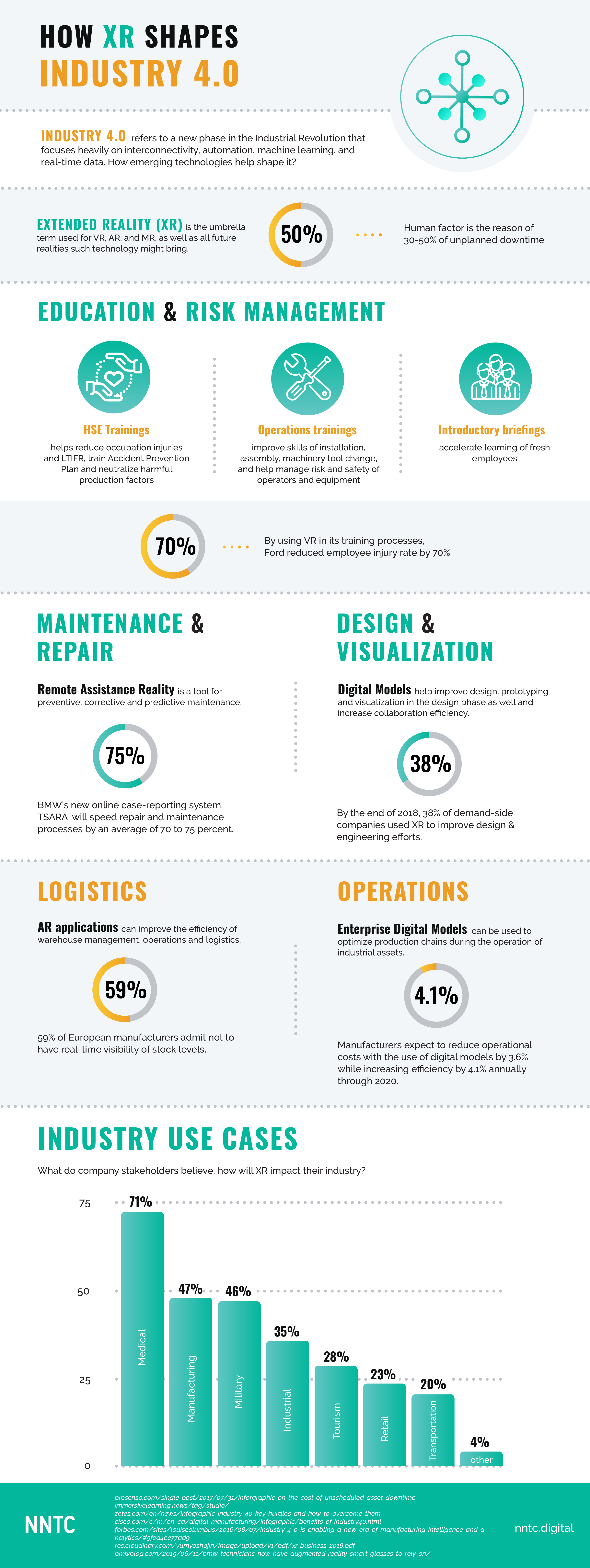

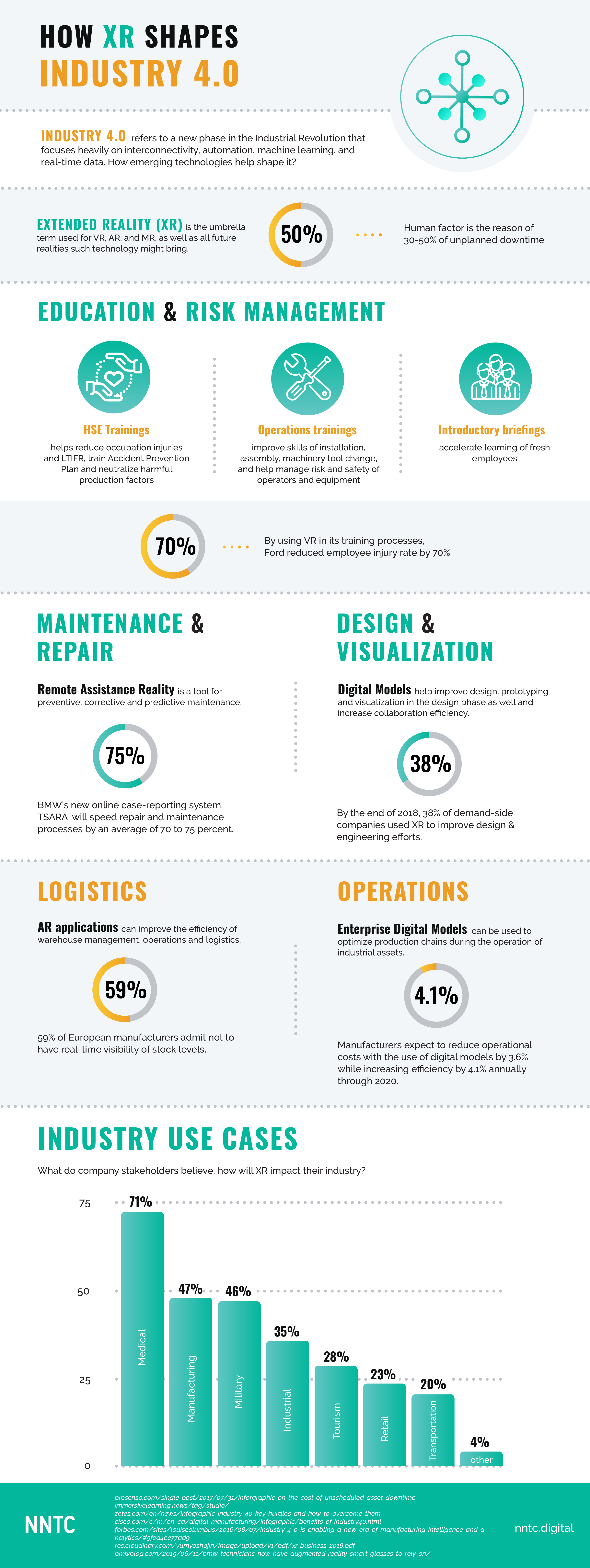

HOW XR SHAPES INDUSTRY 4.0

Robotic Process Automation (RPA) is about optimizing individual processes and freeing up people for human-only tasks. Robots will never completely replace humans, that’s for sure, since feelings, emotions, and critical thinking are all beyond machines’ capability. However, robots excel at calculations and simple tasks – no hard choices or emotional investing.

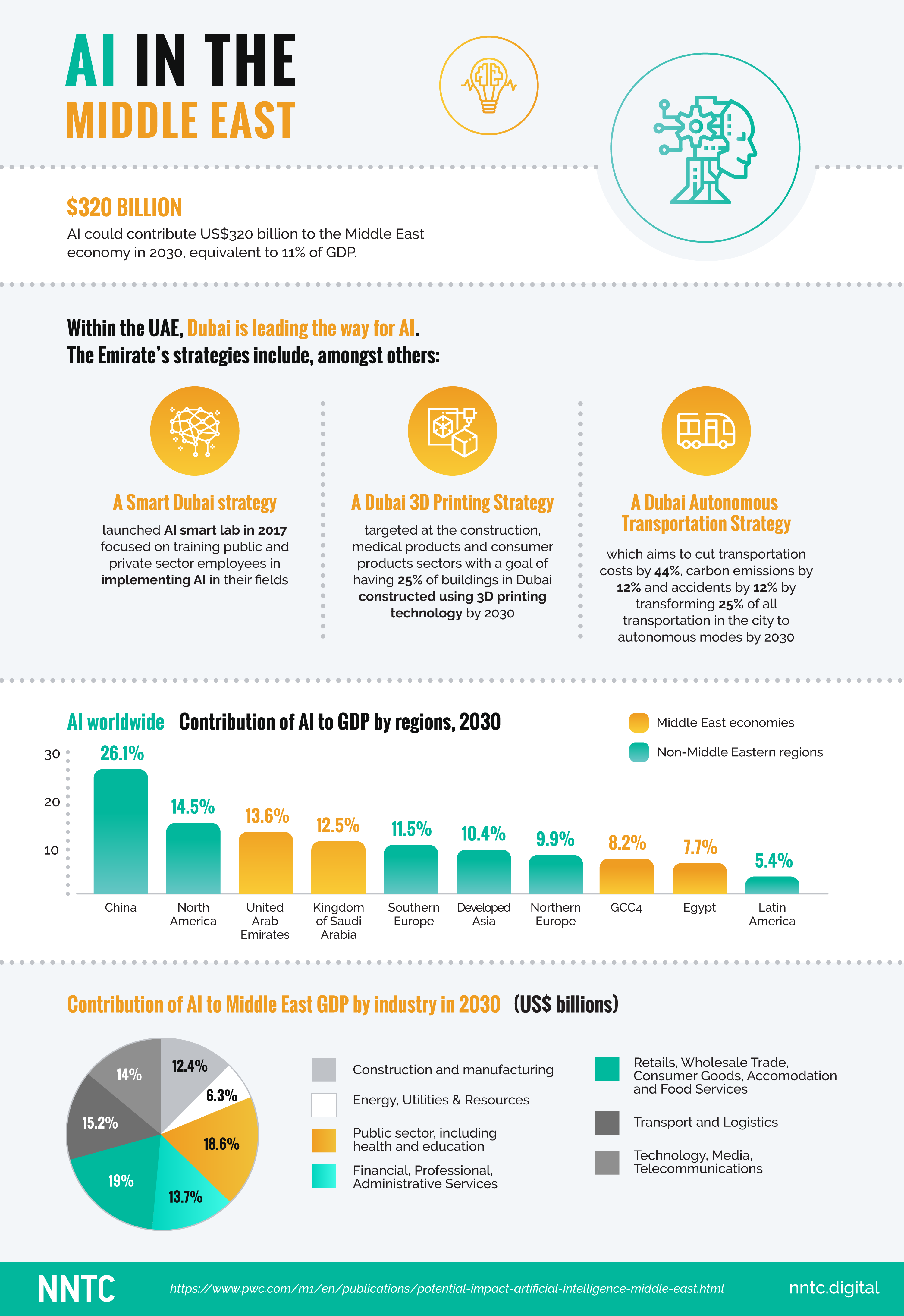

According to PwC Middle East research, the AI market in the Middle East will rise to $320 billion by 2030. There is a strong focus on RPA in customer care services: in 2017, the Dubai Electricity and Water Authority (DEWA) announced the recruitment of five robots for its Customer Happiness Centres. Retailers also tend to engage robots. Pepper, a world-famous robot, welcomes visitors at several UAE banks and points of sale, identifies a customer’s gender, and recommends retail demos or special offers in the store.

For robots, the retail sector is an ideal testing ground and a promising application area. Just look at the numerous use cases. Robotic carts with an optical recognition module automate store shelf control (merchandising, stock and price tag checks), a godsend for merchandisers. Video analytics calculates cashier workload to better schedule sales staff working time in a shopping space. And what about a PoS self-service payment system powered by face recognition? Such systems were rolled out in the Chinese subway and in Amazon Go self-service stores, so we can say for sure that the approach really works.

In warehouses, robots handle and sort products. Among other things, Amazon is famous for its robotized warehouses, which promptly process millions of orders every day. Dozens of robotized auto-loaders, each as small as a common vacuum cleaner, easily move heavy shelf stands with goods to operators, who just take the necessary items from the shelves. As a result, with the help of a robot, one operator does the job of 6-7 people, and order batching becomes cheaper as well. With the routes determined in advance, robots avoid collisions and easily transport big shelf stands within a limited area. Adler, a German clothing chain, leverages robots for warehouse inventory control. Every night, robots called Tory scan RFID tags on goods and generate stock reports.

However, robots cannot replace all sales personnel when it comes to more sophisticated tasks, as we can see from Amazon’s experiment with robotized stores. The company tested its first Amazon Go stores back in 2016, with 10 such stores currently operating in the U.S. There are no cash desks, cashiers, consultants or queues there. At the entrance, shoppers scan their Amazon Accounts and at once find themselves among smart sensors. The system tracks what goods shoppers take from shelves and how they move across the store, and automatically debits the necessary sum from the buyer’s account once they exit the supermarket. Despite the marvelous idea and the company’s attempts to improve the system, the stores regularly face difficulties, as tracking more than 20 people moving across the store turned out to be a real challenge for the cameras. If a shopper takes an item, reads a tag and then puts the item back, the system can lose sight of this item because its location has changed. In addition, you’ll hardly find alcohol in such a store because alcohol sales require shopper age identification.

It may take a long time of AI improvement and education efforts before innovators can introduce new-generation stores operating without cashiers or other personnel. To address this challenge to a certain degree, we have developed an unusual motion detection (UMD) solution to identify shoppers’ atypical behavior. The first implementation stage is continuous learning of the UMD solution on real-life customer data, which takes up to two weeks. The system collects data from video cameras and, after learning, can quickly detect atypical behaviors: a person is running instead of walking, stops where shoppers usually do not stop, zips a coat too slowly, carries a big backpack in their hand, or wears baggy clothes.
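The "learn what is typical, then flag what is not" idea can be illustrated with a deliberately simple sketch. It is not the UMD solution itself: it assumes a single hypothetical feature (walking speed in metres per second, already extracted from video) and a plain z-score threshold, whereas a production system would learn from many more signals.

```python
from statistics import mean, stdev


def fit_baseline(speeds):
    """Learn the typical walking speed (mean, standard deviation) from observed tracks."""
    return mean(speeds), stdev(speeds)


def is_atypical(speed, baseline, threshold=3.0):
    """Flag a speed that lies more than `threshold` standard deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # Degenerate baseline: anything different from the single known value is atypical.
        return speed != mu
    return abs(speed - mu) / sigma > threshold


# Hypothetical learning-phase data: shopper walking speeds in m/s (assumed values).
training_speeds = [1.1, 1.3, 1.2, 1.4, 1.0, 1.25, 1.35, 1.15]
baseline = fit_baseline(training_speeds)

running_shopper = is_atypical(4.5, baseline)   # far above the learned walking speed
walking_shopper = is_atypical(1.2, baseline)   # close to the learned mean
```

The threshold of three standard deviations is an assumption chosen for illustration; in practice it would be tuned against the false alarm rate a store is willing to accept.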

Another challenge for RPA adoption in the retail sector relates to vulnerabilities and hacking attempts, something both competitors and tech-savvy cybercriminals may exploit. Moreover, any Internet-connected system is exposed to DDoS attacks, and robots, like any artificial intelligence tools, are exposed too. Modern RPA systems employ advanced security technologies resulting from a never-ending competition between cybercriminals and corporate white hats. Furthermore, software robots are made fault-tolerant and have proper and reliable protection, which makes them a good choice for any retailer. Opportunities always go hand in hand with risks, and it is an innovator’s responsibility to prudently assess the risk-to-opportunity balance and adopt the best possible approach using verified data and battle-proven technology.

In this infographic you will find the best statistics about artificial intelligence in the Middle East and find some quality insights for your business.

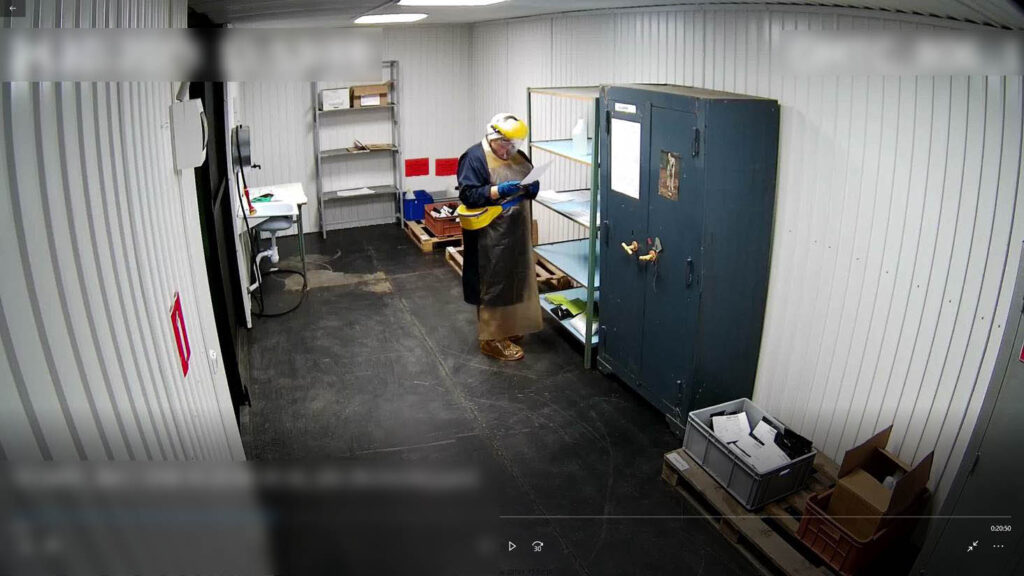

Health, safety and environmental (HSE) training in a virtual environment helps employees address sophisticated issues more effectively, ensures maximum involvement in the production process, and allows for emergency drills that are difficult to conduct in reality.

Whether it is a high-voltage equipment failure or a fire at the production facility, VR safety training helps reveal how employees react to an emergency or sudden obstacle, reproduces tricky situations in which an incident doesn’t follow the usual scenario, and, more importantly, enables employees to prepare for such incidents in advance. For example, the introduction of VR in Ford’s manufacturing process reduced injuries by 70%.

VR TRAINING

VR training is a virtual reality app developed in line with an emergency response procedure and staff training methodology prepared by the specialists of the customer’s training center. During VR training sessions, employees obtain the necessary knowledge and skills and pass tests in a computer simulation. The test results are available to the supervisor, who can thus speed up onboarding and help employees absorb new knowledge through practice.

Any hazardous situation or emergency, such as accidents on electrical distribution networks, can be easily reproduced in a computer simulation, with virtual reality technologies being capable of showing almost any content, including even “playback” of past accidents to prevent them in the future.

The consequences of any HSE breach can be reinforced in virtual reality through visual effects, like a full-screen explosion accompanied by bruising, which affect the psycho-emotional state of employees and make them more careful in their routine activities.

VR TRAINING FORMATS

VR training formats may differ depending on a particular task, each having certain advantages and limitations.

VR trainings can be tailored to various platforms: PC, VR glasses and headsets, industrial VR systems or multi-user devices with VR support. Each scenario differs in terms of user immersion, content adaptability for mobile use, and the quality of graphics.

Augmented reality 3D apps for smartphones and tablets can be used almost anywhere; however, the mobility advantage is balanced by rather poor quality of graphics.

Training on desktops (PCs) and laptops boasts the highest possible quality of graphics with an average level of immersion and mobility and is the most common format used by corporate training centers today.

VR tools are usually divided into:

Each format has its advantages and limitations. Mobile VR offers a high level of mobility, as users need just a smartphone and VR glasses, and a high degree of immersion, since the employee’s peripheral vision is not distracted. Both items easily fit in a backpack, a medium-sized bag or a classic briefcase. As a result, coaches are not tied to a specific training location and can easily conduct field demonstrations almost anywhere.

However, VR glasses do not support physical movement, with all content being perceived just from one point in a 360-degree format.

VR systems (HMD) offer the best possible graphics and the ability to move within a space of nine square meters, thus making it possible to get oneself truly immersed into virtual reality and interact with the surrounding virtual space using joysticks.

This format can be considered mobile as well because the equipment itself does not take much space and is not very heavy, but installation and configuration take time and effort. This tool is perfect for classrooms at corporate training centers. In addition, a multiplayer solution is available for collective training, where users can see each other’s avatars, communicate and perform collective actions in a virtual world, thus achieving the maximum degree of immersion.

Is there a way to make sure that workers actually wear and use personal protective equipment (PPE) at hazardous production facilities? No big deal. Just train a neural network to recognize whether workers all over the facility are wearing PPE, and then prevent accidents. How to do so? That’s what today’s post is about.

Is a standard CCTV system effective? There are ordinary people behind the cameras, who get tired and distracted and have momentary lapses in concentration that could prove fatal for workshop personnel. The good news is that progress comes to save the day. A properly trained artificial intelligence detects safety practice violations at once and immediately alerts the worker, e.g. with a sharp sound, vibration, or SMS.

We trained AI-based video analytics to monitor safety compliance in the workplace in multiple ways:

We called this platform for workplace accident prevention “Digital Worker”, and its video analytics tool can detect:

How does the Digital Worker IoT platform count people and know a worker’s job? One of the ways is digital differentiation, which recognizes the color of a worker’s safety wear or hard hat. Let’s take a drilling site as an example, where each hard hat color depends on the job. Blue hard hats are worn by drilling engineers, green ones by drilling supervisors, and so on. The site can have color video cameras. The number of hard hats counted in real time is equal to the number of workers. Each camera covers a specific area of the drilling site. At the end of the day, all this data is consolidated into a schedule for every area, showing which workers did the job, how many of them there were, and in what area.
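The consolidation step described above can be sketched as follows. This is an illustrative assumption, not the Digital Worker implementation: it presumes an upstream detector already emits `(area, hat_color)` pairs, and the area names and the `ROLE_BY_HAT_COLOR` mapping are hypothetical beyond the blue and green examples given in the text.

```python
from collections import Counter

# Only blue and green come from the text; any other color is treated as unknown.
ROLE_BY_HAT_COLOR = {
    "blue": "drilling engineer",
    "green": "drilling supervisor",
}


def headcount_by_area(detections):
    """Group hard hat detections into per-area role counts.

    `detections` is an iterable of (area, hat_color) pairs, one per hard hat
    seen by the camera covering that area.
    """
    counts = {}
    for area, color in detections:
        role = ROLE_BY_HAT_COLOR.get(color, "unknown")
        counts.setdefault(area, Counter())[role] += 1
    return counts


# Hypothetical detections from two cameras.
detections = [
    ("rig floor", "blue"),
    ("rig floor", "blue"),
    ("rig floor", "green"),
    ("pipe yard", "green"),
    ("pipe yard", "yellow"),
]
report = headcount_by_area(detections)
workers_on_rig_floor = sum(report["rig floor"].values())
```

Because one hard hat corresponds to one worker, summing the role counts for an area gives its headcount, which is the figure the daily schedule would be built from.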

The system recognizes dangerous situations and notifies the operator about them. For example, it is forbidden to be near the drilling rig while it is operating. If a worker approaches the forbidden area, the camera notices it and sends an alert.
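A forbidden-area rule of this kind can be sketched as a point-in-polygon test combined with the rig state. The sketch assumes the worker’s position has already been projected to ground coordinates and that the zone is a simple polygon; `RIG_ZONE` and the function names are hypothetical.

```python
def point_in_polygon(point, polygon):
    """Ray casting test: True if `point` lies inside `polygon` (a list of (x, y) vertices)."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through the point can be crossed.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def should_alert(worker_pos, zone, rig_running):
    """Alert only when the rig is operating and the worker is inside the forbidden zone."""
    return rig_running and point_in_polygon(worker_pos, zone)


# Hypothetical 10 m x 10 m forbidden zone around the rig, in ground coordinates.
RIG_ZONE = [(0, 0), (10, 0), (10, 10), (0, 10)]

alert_inside_running = should_alert((5, 5), RIG_ZONE, rig_running=True)
alert_inside_stopped = should_alert((5, 5), RIG_ZONE, rig_running=False)
alert_outside_running = should_alert((15, 5), RIG_ZONE, rig_running=True)
```

Tying the alert to the rig state keeps the rule from firing during maintenance, when being near the stopped rig is presumably allowed.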

The system also reacts when foreign objects prevent machinery operation. This picture shows how an animal is detected on the production line:

Can you trick a neural network? Let’s say a worker fastens a hard hat to a belt. What is going to happen? In a case like this, two detectors are involved: they find the person’s skeleton, check whether a color spot is on top of it, and look for objects that move in sync. A hard hat on the belt will hardly “look around” with the person, because to do so it would have to be on the head, which moves in a certain way. Such movement is easy to detect, so tricksters are caught as soon as they try to break the rules.
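The "color spot on top of the skeleton" check can be illustrated with a small geometric sketch. It covers only the position test, not the movement-in-sync test, and it assumes that a pose detector supplies a head keypoint and a shoulder line in image coordinates (with y growing downward) and that a second detector supplies the hat’s center; all names and numbers are hypothetical.

```python
def hat_is_worn(hat_center, head, shoulders_y, tolerance):
    """Return True if the detected hat sits on the head rather than elsewhere on the body.

    hat_center, head: (x, y) pixel coordinates; y grows downward in image space.
    shoulders_y: y coordinate of the shoulder line from the pose detector.
    tolerance: maximum allowed pixel offset between hat center and head keypoint.
    """
    dx = abs(hat_center[0] - head[0])
    dy = abs(hat_center[1] - head[1])
    near_head = dx <= tolerance and dy <= tolerance
    above_shoulders = hat_center[1] < shoulders_y
    return near_head and above_shoulders


# Hypothetical detections for one worker: head keypoint at (102, 55), shoulders at y = 90.
on_head = hat_is_worn((100, 48), head=(102, 55), shoulders_y=90, tolerance=15)
on_belt = hat_is_worn((105, 160), head=(102, 55), shoulders_y=90, tolerance=15)
```

A fuller check would also compare how the hat and the head keypoint move from frame to frame, which is the part of the description this sketch leaves out.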

What else can a neural network see?

The Digital Worker platform is a mix of IoT and AI technologies and tools for industrial enterprises and construction companies, developed to ensure employee safety and improve performance with a focus on minimizing the number of accidents and preventing fraud and safety violations.

People lie. Statistically, any person aged 18 to 44 tells a lie at least twice every 24 hours. Sad but true: some lies can endanger the people around. So let us dive deep into innovations that successfully detect deception around the world.

One of the best places to implement such technologies is an airport. The Automated Virtual Agent for Truth Assessments in Real-Time, or AVATAR, which is basically a lie-detecting computer kiosk system, is installed in some international airports in the USA, Canada, and the European Union. A 3D avatar of a customs officer appears on a terminal’s screen and simply asks travelers a few questions, while the system assesses the respondent and reveals deceptive or risky behaviors:

According to Aaron Elkins, a professor at the San Diego State University, the AVATAR as a deception-detection judge has a success rate of 60% to 75% and sometimes up to 80%. “Generally, the accuracy of humans as judges is about 54% to 60% at the most,” he said.

Yet another solution was developed by Converus (Lehi, Utah) claiming that their EyeDetect is the most accurate lie detector available. A computer runs this software and a specialized camera captures eye movement of the person being tested. During a 30-minute true/false test, the camera captures changes in pupillary response, eye movement, blinking, staring, and makes other assessments to evaluate how honest a respondent is. The system’s algorithm makes 60 measurements per second, which is about 180,000 per test. The Department of State recently paid Converus $25,000 to use EyeDetect when vetting local hires at the US Embassy in Guatemala, WIRED’s reporting revealed.

iCognative takes a different approach: its technology detects whether specific information is stored in the brain by measuring brainwaves. A wireless headset is placed on a person’s head; its sensors collect brain responses from the scalp and muscle movements. The person goes through a test with special triggers (words, phrases or pictures) that form associations and provoke a reaction from the brain. The iCognative software analyzes EEG signals and determines whether the information under test is present or absent.

Innovative technologies in deception detection can make a difference: more crimes cleared, offenders caught, and which is no less important, fewer violence attempts in the future. If you are interested in implementing AI for security or other purposes, contact NNTC and we will provide all the information and help.

Gartner, Inc., a research and advisory company, has revealed a list of technology trends that will have an impact on businesses in 2019. Here are the most interesting of them – we are sharing five out of ten technology trends highlighted by Gartner analysts David Cearley and Brian Burke (Top 10 Strategic Technology Trends for 2019, published 15/10/18).

Autonomous Things

Autonomous things, i.e. robots, drones, vehicles, appliances, and agents, use AI to automate functions previously performed by humans. Experts believe that interaction of autonomous devices will be the main path for AI development.

Augmented Analytics

Augmented analytics enables the testing of a broad range of hypotheses, thus opening up new data processing and analysis opportunities. Moreover, automated insights of augmented analytics can be embedded into enterprise applications to optimize decisions and actions of all employees. Augmented analytics includes:

AI-Driven Development

New platforms will allow application developers to integrate AI capabilities and models into a solution without the help of professional data scientists.

Tools used to build AI-powered solutions (AI platforms and services) are expanding to target not only data scientists, but also the community of professional developers. These tools are, in turn, being empowered with AI-driven capabilities that help professional developers and automate tasks related to the development of AI-enhanced solutions.

AI-enabled tools in particular are evolving from assisting with and automating application development functions to automating more sophisticated business-domain processes (from general development to business solution design).

Digital Twins

A digital twin refers to the digital representation of a real-world entity, process or system. Separate digital twins can interconnect to form larger and more complex systems. They are mainly used in the Internet of Things (IoT) to monitor system health, show new ways to improve efficiency, and help develop new technologies and services. Experts say that, at the next stage of technology evolution, enterprises will be implementing digital twins of their entire organizations (so-called DTOs).

NNTC also created a digital twin for a massive enterprise. In 2018, we launched our “Digital Worker” safety platform. The platform shows “the big picture” of the enterprise by combining multiple events (3D format included) registered by any systems: video analytics, industrial and wearable IoT, access control, SCADA, and other systems.

Smart Spaces

A smart space is a physical or digital environment in which humans interact with technology-enabled systems and which evolves across five key dimensions: openness, connectedness, coordination, intelligence, and scope. According to David Cearley, a Gartner analyst, this trend has been coalescing for some time around elements such as smart cities, digital workplaces, smart homes, and connected factories.

*Source: Gartner Identifies the Top 10 Strategic Technology Trends for 2019

Artificial intelligence (AI), a sharp uptrend from 2018, keeps running high this year. However, many people are still wary of it. No matter how great the scientific innovations are, there are very common myths about AI, and it is indeed high time to take a close, fresh look at the most popular ones. So let’s bust them.

AI: Much More Than Just a Robot

Pop culture actively promotes AI as android robots (Blade Runner; I, Robot; Chappie; Detroit: Become Human; Star Trek, etc.). Robots are truly an outstanding example of what AI can be. Just think of those running, jumping and dancing bad boys made by Boston Dynamics or Pepper robots, famous welcoming assistants. However, AI goes far beyond this, and you encounter this technology even more often than you can imagine.

For example, artificial intelligence recognizes and processes the pictures you take on your phone. Surely, more megapixels mean a better picture, but the market is taken by the companies whose powerful phones are accompanied by strong processing algorithms (e.g. color correction, white balance, zoom, and background blur), the quality of which directly depends on artificial intelligence performance.

Another example of AI application is healthcare. A neural network by Third Opinion analyzes a patient’s medical data (X-rays, ultrasound scans, MRI, blood test results) and detects abnormalities as well as medical professionals do. AI also watches over us: a video analysis system by VisionLabs, with the help of NNTC, identifies individuals in public places, finds matches in the wanted persons database, and promptly alerts the security service.

Last but not least, AI drives science development. On April 10, 2019, an AI algorithm developed by Katie Bouman, an MIT graduate, enabled scientists to present the first ever black hole image. She created the algorithm for visualizing data from telescopes around the world that followed a black hole, with imaging now achieving angular resolutions as fine as 10 microarcsec.

AI Will Not Take Your Job

Robots will never completely replace humans, that’s for sure, since feelings, emotions, and critical thinking are all beyond machines’ capability. However, robots excel at calculations and simple tasks – no hard choices or emotional investing.

As a rule, an AI can handle only one particular task perfectly. Take an AI that plays chess: it does nothing but play chess, even though it is at the top of the game. If you ask it to distinguish between a kitten and a puppy in a picture, you will see an epic fail.

Lacking emotional investment, which is synonymous with art professions and inherent in service and teaching, artificial intelligence has absolutely no clue how to act in critical situations when time is running out and critical thinking is a must. AI needs human support and supervision, so it cannot act without guidance yet.

How can drones help fight illegal fishing? Let’s discuss the most common use case.

To detect and track illegal fishing vessels in the sea, a drone has to cover the largest possible area during one flight. In addition, drones should carry cameras to take photos and videos, powerful transmitters to stream video to a command center, and high-capacity batteries to ensure long operation.

Today, responsible authorities use long-range fixed-wing aircraft, which meet the above requirements, but at the cost of convenience and flexibility:

To overcome the above limitations while keeping the required drone range, VTOL aircraft can be used. Recently, the market saw a lot of announcements of VTOL aircraft, ranging from small ones with a 1.5 m wingspan to larger 4 m ones.

Such aircraft use two major designs: a) tilt rotors, where the same motors and propellers are used for take-off and landing, and b) aircraft that use propellers for horizontal flight and dedicated VTOL rotors for take-off and landing. Dedicated VTOL propellers are easier to build and maintain; however, they create additional drag during horizontal flight, thus reducing aircraft flight range. On the other hand, tilt rotors require additional mechanics to operate.

Overall, despite the above trade-off, VTOL aircraft can greatly increase the efficiency of patrolling operations against illegal fishing, mainly because patrolling areas can be changed easily.